Written by Dr. Aisha Qayum

It’s 9:30 am on a busy ward round.

A patient looks at me and asks a simple question:

“Doctor, how sure are you about this diagnosis?”

I pause for a moment. I’ve reviewed their blood results, examined them, and thought through my differentials. But I’ve also used a digital tool that flagged a high-risk pattern, something subtle I might have otherwise overlooked.

For a second, I hesitate.

Do I mention it?

Do I say an AI-supported tool suggested this?

Or do I present it as entirely my own clinical judgment?

This is something I’ve started to notice more and more as a newly graduated doctor. Artificial intelligence (AI) isn’t a distant idea anymore—it’s slowly becoming part of everyday clinical practice, including within the NHS. From supporting imaging interpretation to highlighting clinical risks, these tools are beginning to shape how we think and make decisions.

But one question isn’t talked about enough: How do we actually communicate AI-supported conclusions in a way that is safe, clear, and honest?

Because at the end of the day, patients don’t hear “AI-assisted decision.” They hear your judgment.

The trust gap: where communication matters most

AI in healthcare sits in an interesting space. On one hand, it has the potential to improve efficiency and support better decisions. On the other, it can feel unfamiliar—both for patients and for doctors early in their careers.

From what I’ve seen, most patients are comfortable with technology being used in the background, but not as a replacement for the doctor in front of them. And that’s where communication becomes important.

There’s a balance we have to get right:

- If we overemphasise AI, it can sound like we’re handing over responsibility

- If we ignore it completely, we risk not being transparent

As resident doctors, we’re often navigating this balance while still building our own confidence.

Communicating with patients: keep it simple and human

Patients don’t need to understand how an algorithm works. What they really want to know is: what does this mean for me?

Instead of saying:

- “An AI model suggests a high probability of pulmonary embolism.”

I’ve found it’s much clearer to say:

- “We used a tool that helps us analyse patterns in your results. It suggests there may be a clot, so we’re going to investigate this further.”

It keeps the explanation simple, and more importantly, it keeps the focus on the plan.

A good way to think about it is: Translate the insight, not the technology.

Communicating uncertainty: don’t pass the responsibility

One thing I’ve become more aware of is how easy it is to lean on technology—especially when you’re still gaining confidence.

But wording matters.

Saying things like:

- “The AI says…”

- “The system thinks…”

can unintentionally shift responsibility away from us.

What feels more appropriate is something like:

- “Based on your symptoms, examination, and the tools we use to support our decisions, I think…”

It still acknowledges the support—but makes it clear that the decision is yours. Because ultimately, it is.

Communicating with peers: openness goes a long way

With colleagues, the conversation feels slightly different.

AI is already being used in different parts of clinical work, but not everyone uses it in the same way—and not everyone is fully comfortable with it yet.

I’ve found that being open helps:

- mentioning that I used a tool

- briefly explaining what it highlighted

- asking others what they think

It turns it into a discussion, rather than something to either rely on completely or avoid mentioning altogether.

Communicating with supervisors: show your thinking first

When presenting to seniors, I’ve realised it’s less about whether you used AI, and more about how you present your reasoning.

For example:

- “I think this is pancreatitis based on the clinical picture, and I used a support tool which highlighted similar features.”

This feels very different to:

- “AI suggested pancreatitis.”

The first shows that you’ve thought it through. The second can sound like you’re relying on the tool. And at this stage of training, demonstrating your thinking is what really matters.

Being transparent with patients

There’s still a lot of discussion around how much patients should be told about AI use. But from what I’ve seen, small amounts of transparency can actually build trust.

Something as simple as:

- “We sometimes use digital tools to support our decisions, but your care is always reviewed by a doctor.”

can be reassuring. It makes it clear that technology is involved, but that it hasn’t replaced human judgement.

What AI still can’t do

AI can be incredibly useful. It can process large amounts of information quickly and highlight patterns that might not be obvious at first glance.

But there are things it simply can’t replace.

- It can’t sit with a patient who’s anxious.

- It can’t pick up on subtle emotional cues.

- And it can’t take responsibility for decisions.

That still sits with us.

A simple way to approach it

Over time, I’ve started to think of communicating AI-supported decisions in a simple way:

- Start with your clinical reasoning

- Mention AI only as support, if needed

- Explain what it means for the patient

- Be clear about your plan

It’s not complicated—but it helps keep communication clear and consistent.

Final thoughts

AI is gradually becoming part of everyday medicine, and as resident doctors, we’re learning how to work alongside it in real time.

But while technology is evolving quickly, one thing hasn’t changed:

Patients are not placing their trust in algorithms.

They are placing it in us.

And that means how we communicate—especially when AI is involved—matters just as much as the decision itself.

Discover Trusted AI resources!

Elsevier provides enhanced learning solutions you can trust, powered by responsible AI! Searching only Elsevier curated content, not the open web. Click here to check if you have access to Elsevier AI resources at your institution!

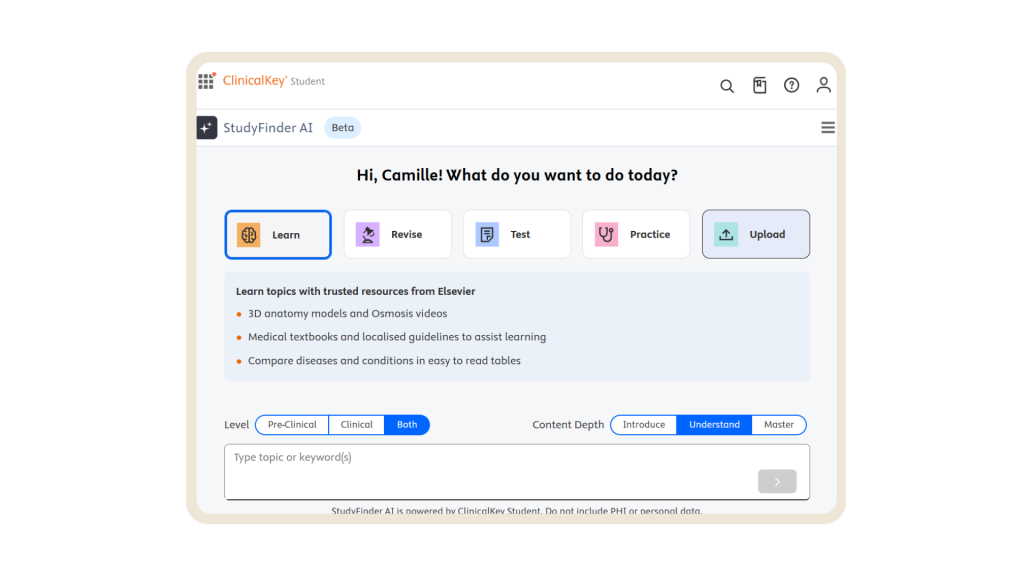

Studyfinder AI, enhancement to ClinicalKey Student designed to help you find what you need faster

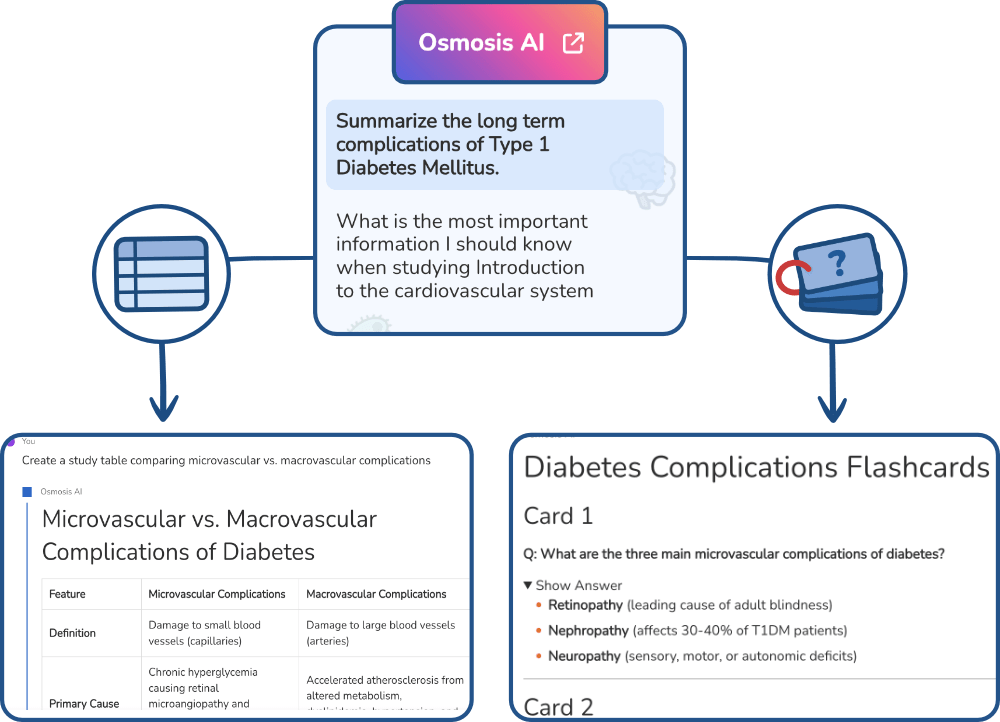

Osmosis AI, conversational study companion designed to help you make sense of the most complex topics
