Written by: Hussain Hilali, Resident Doctor | Follow Hussain on Instagram & TikTok

“Artificial intelligence is revolutionising medical education by empowering learners to optimise their academic journey through personalised and adaptive support.”

Sounds impressive, right?

It also sounds like the sort of sentence you’d read, vaguely agree with, and then forget about 10 seconds later. And that’s kind of the point. AI is already very good at producing writing that sounds polished, confident, and vaguely smart, even when it’s bland, inaccurate, or both.

That matters because AI is already everywhere in education. Most medical students have probably used it in some form, whether that’s to explain a topic, summarise notes, make flashcards, plan revision, draft something, or just help them get started when they can’t be bothered.

So this isn’t really a debate about whether medical students should be using AI or whether it’s “the future”. It’s already here. It’s already in people’s workflow.

The more useful question is this:

How do you use AI in a way that helps you, without quietly diminishing your ability to think for yourself?

Let’s be honest about why students use it

Because it’s useful.

Medical school isn’t easy. There’s too much content and too little time, and a lot of what makes revision hard isn’t even the difficulty of the material itself. It’s the sheer quantity of what’s out there, and recognising what’s worth learning without falling down the rabbit hole of specialist knowledge.

You’ve got lecture slides that are awful, notes that are all over the place, and a revision list so long that even figuring out where to start feels draining.

That’s where AI can genuinely help.

Used properly, it can help you:

- Simplify a topic when the textbook explanation is painful

- Turn your notes into flashcards or quiz questions

- Generate mock OSCE stations

- Compare similar conditions side by side

- Rewrite your notes into a cleaner structure

- Help you make a revision plan when you feel overwhelmed

- Explain something at different levels depending on how much depth you want

That’s the real appeal. It saves time, reduces friction, and makes it easier to get going.

The problem starts when it stops being a tool for efficiency and starts becoming a substitute for understanding.

The best way to think about AI

The safest mindset is probably this:

AI is a fast assistant, not a trusted authority.

That sounds obvious, but loads of people don’t actually use it that way. They use it more like an invisible tutor who must know what it’s talking about because the answer sounds clean and well-written.

That’s exactly where people get caught out.

AI can give you a genuinely useful explanation. It can also give you complete nonsense wrapped up in a tonne of absolute confidence. And annoyingly, when you’re tired or rushing, those two things can look very similar.

That’s why you can’t treat it like a lecturer, a guideline, or a proper textbook. It can help you, but it’s not accountable. That part is still on you.

How AI can improve your revision

This is where I think students can get the most value from it, as long as they use it deliberately.

1. Use it to get unstuck, not to avoid the work

One of the best uses of AI is when you’re stuck at the start.

Maybe you don’t understand nephrotic versus nephritic syndrome. Maybe endocrine physiology is frying your brain. Maybe you’ve got a pile of lecture notes and no clear way into the topic.

Instead of asking for the final answer, ask for a useful first pass.

For example:

- “Explain this simply first, then build up the detail”

- “Compare these two conditions in a table”

- “Give me the three highest-yield differences”

- “Test me on this one question at a time”

- “Explain why this is wrong rather than just giving me the right answer”

That’s a much better use of it than just saying “summarise this” and passively reading whatever comes out.

2. Use it for active recall, not fake productivity

Reading AI summaries can feel productive, but a lot of the time it’s just passive consumption dressed up as revision. You’re still just reading.

A better use is to make AI generate things that force you to think, like:

- Short answer questions

- SBA-style questions

- OSCE scenarios

- Flashcards

- “What would you do next?” questions

- Common traps or examiner-style pitfalls

That turns it into a revision tool rather than just a machine that hands you neat-looking notes.

3. Use it to improve structure

AI is often more useful for structure than for truth.

What I mean by that is it can be really good at helping you organise things:

- Turn messy notes into headings

- Make a revision checklist

- Draft a timetable

- Improve the flow of your own writing

- Break a big task into smaller chunks

- Help you plan an essay or presentation

That’s very different from asking it to think for you.

4. Use it to challenge your thinking

One of the better ways to use AI is to make it push back on you.

Ask things like:

- “What’s weak about this answer?”

- “What would an examiner criticise here?”

- “What are the common traps in this topic?”

- “Argue the other side”

- “What assumption am I making here that might be wrong?”

That’s useful because it keeps you engaged. It makes you defend your thinking rather than just absorb information.

And that’s usually where the actual learning happens.

Some pitfalls of AI

1. Trusting confident language too easily

AI often sounds clever even when it’s wrong. That’s what makes it dangerous. If it constantly gave obviously ridiculous answers, nobody would trust it. The problem is that a lot of the time it gets things nearly right, which is much more convincing.

And in medicine, “nearly right” can still be wrong enough to matter.

So if you’re using AI for facts, guidelines, drug information, references, management, or anything clinical, you need to check it properly.

Because “that sounds about right” is not a safe standard.

2. Letting it flatten your critical thinking

This is a bit more subtle, but I think it matters just as much.

If every time you hit friction you immediately offload the task to AI, your revision might feel smoother, but your brain is doing less of the work. And unfortunately, the hard bit is often the bit that actually makes you learn.

Medicine is not just recall. It’s judgement, reasoning, prioritisation, and being able to think clearly when things are uncertain.

If AI starts doing too much of that for you, you might become faster, but you may also become weaker.

That’s not a great trade.

3. Using it for coursework until the work stops being yours

There’s a difference between:

- Using AI to improve clarity

- Using it to suggest structure

- Using it to help you brainstorm

- Using it to write the whole thing

The first three help you do the work; the last one does it for you. Students blur that line all the time.

And the issue isn’t just academic honesty. The writing often stops sounding like an actual person. Reflective writing especially isn’t supposed to sound like a robot with a LinkedIn account.

If it no longer sounds like your thinking, then at some point it stops really being your work.

4. Putting confidential information into it

This should be obvious, but it still needs saying.

Do not paste identifiable patient information into public AI tools.

No names. No dates of birth. No hospital numbers. No screenshots with visible details. No copying clinic letters in and asking it to tidy them up.

Just because a tool feels secure doesn’t mean the rules suddenly disappear. Professionalism still applies.

5. Using AI-generated references without checking them properly

This is one of the things that worries me most, especially in academia.

AI can produce references that look completely believable. Titles sound real. Journals sound plausible. Authors look right. Sometimes you could easily skim past them and assume they’re genuine.

But when AI-written work includes an error and that work gets shared, cited, or even published, the problem doesn’t stay contained. It spreads.

Someone reads it and repeats it. Someone cites it. Someone else assumes that because it appears in a written piece, it must have been checked. Then that same bad point starts creeping into other work.

That’s how the quality of the literature gets dragged down over time, not always through huge obvious fraud, but through small believable bits of rubbish getting recycled until they start looking legitimate.

That’s probably one of the more dangerous things about AI in academia. It doesn’t just create wrong answers; it can help wrong answers travel.

A few rules I’d recommend

If I had to reduce all of this to something practical, it’d be this.

Use AI for:

- Simplifying difficult concepts

- Making revision more active

- Improving structure

- Generating practice questions

- Getting a starting point when you’re stuck

- Helping you spot gaps in your understanding

Don’t use AI for:

- Final fact-checking

- Guidelines without verification

- References you haven’t checked yourself

- Reflective writing that’s meant to be personal

- Anything involving patient-identifiable information

- Replacing the thinking bit of revision

And honestly, that last one is probably the most important.

Don’t let AI remove the part where you actually have to think.

Because that part is the whole point.

Final thought

AI isn’t going away, and pretending it’s not already changing education would be naive.

The students who benefit most from it won’t be the ones who use it for everything. They’ll be the ones who use it properly.

That means using it to make revision sharper, workflow quicker, and learning more efficient, without letting it replace your judgement.

Because in medicine, polished nonsense is still nonsense.

And that’s a dangerous thing to get too comfortable with.

Discover Trusted AI resources!

Elsevier provides enhanced learning solutions you can trust, powered by responsible AI that searches only Elsevier-curated content, not the open web. Check whether your institution gives you access to Elsevier AI resources!

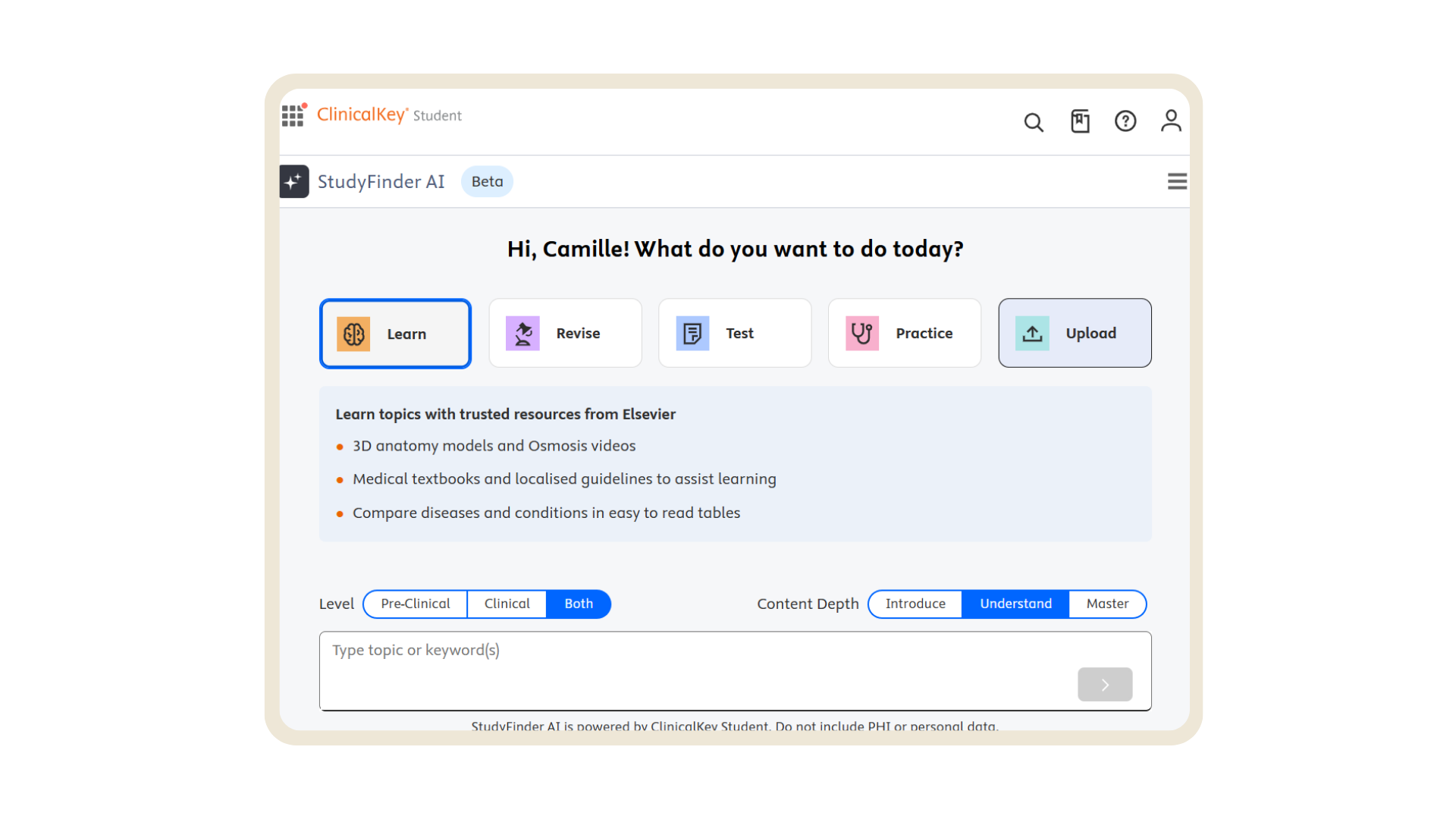

Studyfinder AI: an enhancement to ClinicalKey Student designed to help you find what you need faster

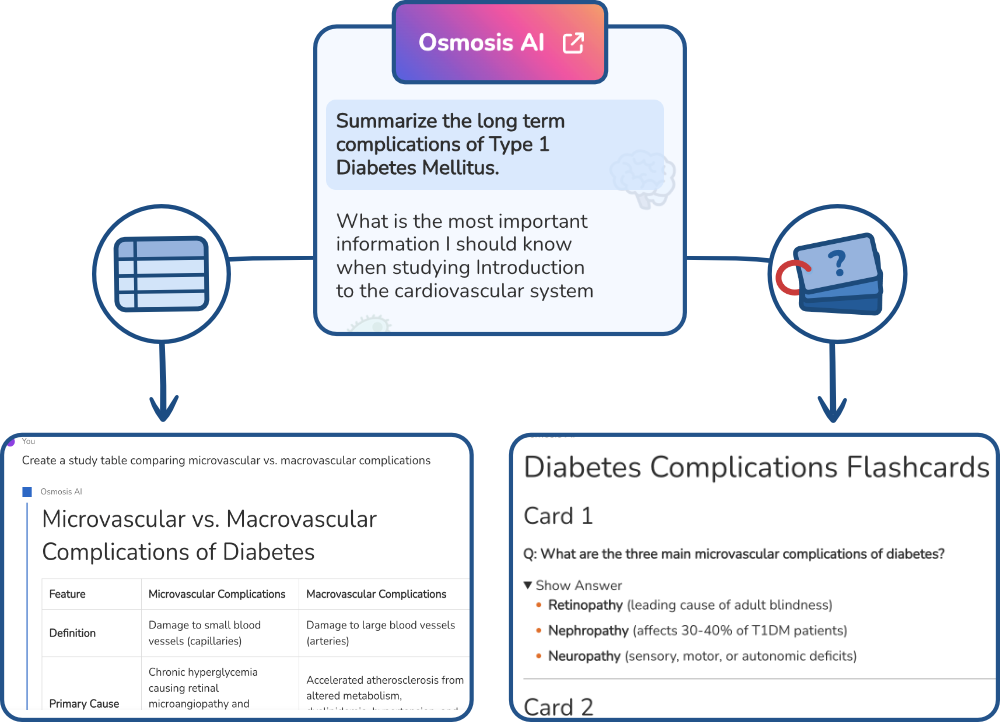

Osmosis AI: a conversational study companion designed to help you make sense of the most complex topics
